KETOS CEO Meena Sankaran recently sat down with Gary Wong (Principal, Global Water Industry, OSIsoft) and discussed challenges and opportunities related to water analytics in the year ahead.

Topics were wide-ranging and included issues related to solution awareness, data access, and data security.

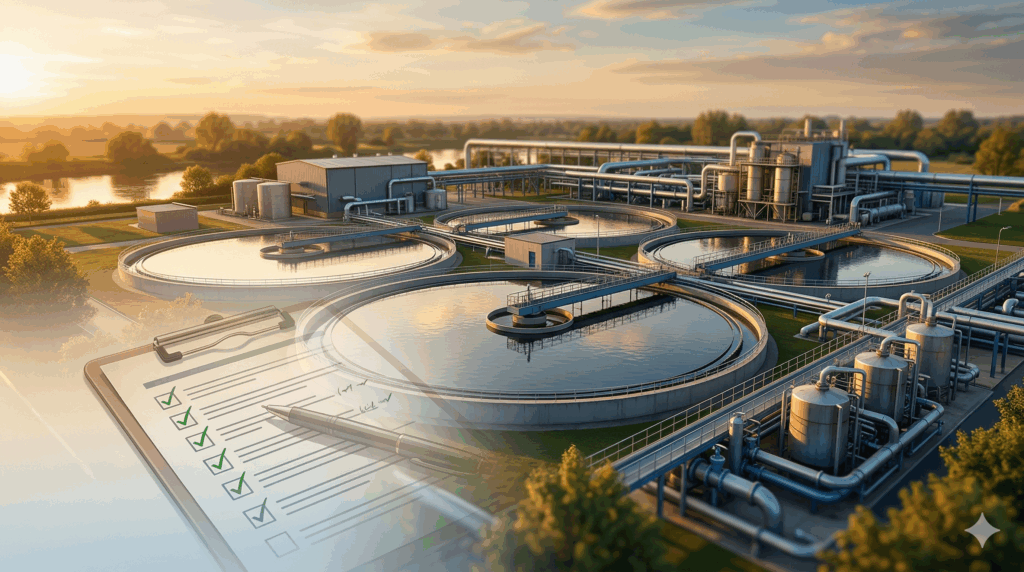

Water Analytics Solution Awareness

One of the biggest takeaways, as pointed out by Gary Wong, is that water operators often aren’t aware that solutions such as KETOS exist, or that those solutions are simple, interoperable, and easy to implement. Many don’t realize that water analytics solutions can help them:

- Automatically collect data on a variety of water quality parameters

- Aggregate data in a centralized repository and/or connect with other systems

- Automate data analysis and threshold-based alerts

- Make data-driven decisions in real-time

- Leverage AI to predict usage patterns and maintenance needs
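To make the idea of threshold-based alerts concrete, here is a minimal Python sketch. It is an illustration only, not how KETOS or any other platform implements alerting: the `Reading` type, the `check_alerts` function, and the limits in `THRESHOLDS` are all hypothetical, and real limits depend on the site and applicable regulations.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    parameter: str  # name of the water quality parameter, e.g. "pH"
    value: float    # measured value


# Hypothetical acceptable ranges (low, high) per parameter.
# These numbers are placeholders for illustration only.
THRESHOLDS = {
    "pH": (6.5, 8.5),
    "turbidity_ntu": (0.0, 1.0),
}


def check_alerts(readings):
    """Return one alert message for each reading outside its configured range."""
    alerts = []
    for r in readings:
        limits = THRESHOLDS.get(r.parameter)
        if limits is None:
            continue  # no threshold configured for this parameter
        low, high = limits
        if not (low <= r.value <= high):
            alerts.append(f"{r.parameter}={r.value} outside [{low}, {high}]")
    return alerts
```

Calling `check_alerts([Reading("pH", 9.1), Reading("turbidity_ntu", 0.4)])` flags only the pH reading, since the turbidity value sits inside its placeholder range.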

Many operators don’t seek out these types of solutions because the assumption is that they require significant investments of time and money – and require significant expertise to implement, configure, and maintain over time.

The fact is that solutions like the KETOS Smart Water Intelligence Platform don’t require significant investments. They empower operators to make data-driven decisions about water quality in real time and enable data-driven operational process controls.

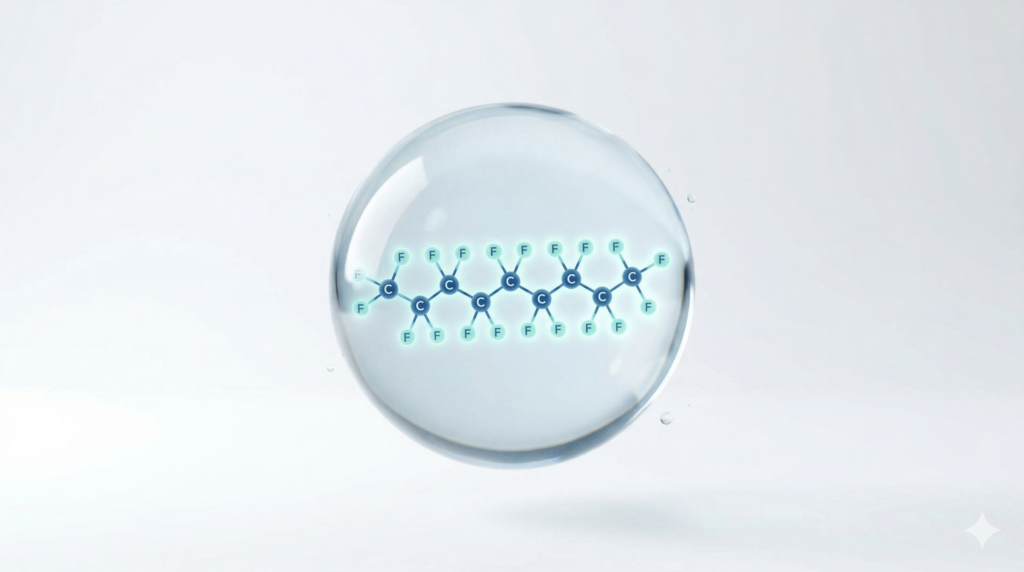

Data Security and the Cloud

While great strides have been made to increase security in the cloud, some industries and companies still have reservations about storing data in the cloud. For these folks, concerns about data security, no doubt, will only be exacerbated after the February 2021 cyber attack on a water treatment plant in Florida. While the intrusion into the facility’s system could have been easily averted, the very idea of a cyber attack or hack makes users wary of connecting to technology beyond their premises.

However, as Gary points out in the webinar, the cloud is typically safe and is used by most people every day. Most of us wouldn’t think twice about handing over a credit card to pay for a meal at a restaurant, yet sending information online via the cloud is likely safer because of the number of protocols in place to protect the user.

The reality is that cloud vendors offer significant security and compliance controls for water analytics. Data is encrypted, secured, backed up, and retained in a way that ensures its security and retention over time. In addition, most software solutions are built so that the data itself is anonymized or structured in a way that greatly reduces a hacker’s ability to do harm even in the event of a data breach.

Once again, it will be incumbent on technology companies to educate their customers on data security and how platforms are protecting them.

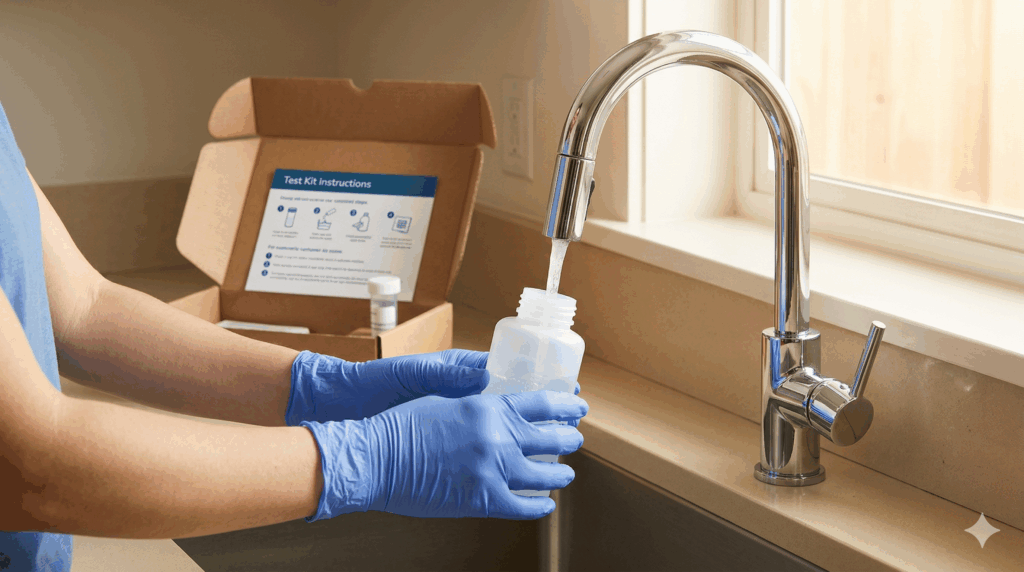

Data Quality and Data Accessibility for Water Analytics

Sending data to the cloud is one thing. Accessing the data once it is collected is an entirely different issue, and it’s a real problem. OSIsoft recently surveyed around 200 utilities and found that, shockingly, 61% of those surveyed had issues accessing their own data.

Most utilities are data rich, but information poor.

The amount of data collected can be staggering, and it often comes from different sources, at different times, and in different data types or output files. The information may come in the form of an Excel spreadsheet or a PDF. It may be on a dashboard here and a downloaded file there. While water operations may be flush with data points across their entire infrastructure, they’re information poor in terms of being able to actually make sense of it. As a result, 99.5% of collected data never gets used or analyzed.
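As a rough illustration of what pulling disparate sources together can look like, the Python sketch below normalizes a spreadsheet-style CSV export and a dashboard-style payload into one time-ordered list of records. The column names, the payload shape, and the function names are all assumptions made for this example; real exports will differ, and the sketch assumes ISO-style timestamps so that sorting them as text also sorts them by time.

```python
import csv
import io


def from_csv_export(text):
    """Parse a CSV export assumed to have 'time', 'parameter', 'value' columns."""
    return [
        {"time": row["time"], "parameter": row["parameter"], "value": float(row["value"])}
        for row in csv.DictReader(io.StringIO(text))
    ]


def from_dashboard(payload):
    """Flatten a hypothetical dashboard payload: {parameter: [(time, value), ...]}."""
    return [
        {"time": t, "parameter": name, "value": float(v)}
        for name, series in payload.items()
        for t, v in series
    ]


def merge_sources(*sources):
    """Combine records from every source into one list ordered by timestamp."""
    merged = [rec for src in sources for rec in src]
    # ISO-style timestamps sort chronologically when compared as strings.
    return sorted(merged, key=lambda rec: rec["time"])
```

Once every source lands in the same record shape, the same analysis or alerting logic can run over all of it, instead of over one file or dashboard at a time.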

The key is for water operators to focus on KPIs that can help them make informed decisions. Doing so ensures that the right data is actually being collected, allowing operators to cut out much of the noise (irrelevant data) and reduce information overload.

A big challenge in 2021 will be helping water operators tie up those loose ends and implement technology that can make sense of data in a way that helps with operational decision making – without having to rely on data scientists to collect, aggregate, analyze, and make sense of these disparate data sources.

Finally, architecting this data in a meaningful way is critical if an organization wants to take advantage of machine learning and AI. These technologies are here to stay and will continue to gain importance and prominence. However, the insight they ultimately provide is only as good as the data collected. Helping these technologies “learn” from well-structured data will help operators take advantage of predictive and prescriptive analytics.

The Haves and Have Nots of Remote Monitoring

COVID-19 has underscored the need for remote accessibility of data – and not just for overall ease of use and convenience. Over the last 12 months, remote data access has been shown to be important from a health and safety standpoint. During the pandemic, the haves and have-nots of data accessibility were laid bare. There were organizations and utilities that had remote capabilities (for data access and water monitoring) in place, and were able to protect operators. However, many were left to flounder, working to find ways to socially distance and even sometimes requiring workers to remain mobilized for a week or two onsite, having to work with systems that required more manual interventions.

As the pandemic drags on, it’s likely that more utilities and organizations will be looking for ways to automate and remotely monitor their services. 2021 will likely see an uptick in interest in building more resilient systems that will have knock-on effects beyond COVID-19.

If you would like to watch the webinar in its entirety, you can catch it here: